2011 Site Updates

Some links and images are no longer available in our older site updates. Additionally, some information is no longer applicable.

For the latest site updates, click here.

December 1st, 2011

It's that time of year again. The site has gone into sleep mode for the next 6 months. Some systems are operating on a reduced schedule for the off season. The recon system is updating more slowly and only checking HDOB and dropsonde messages. Some other changes have also been made. The old model system has finally been deactivated. After five years, the simple, yet demanding, system has been retired. The new model system, which was in beta testing, is now our official model system. It has performed very well this season and therefore I decided to retire the old system. There is only one behind the scenes error with our model system. On some occasions it leaves behind some old data when a storm is no longer active. (This is on the server side, not in the public section.) I still have not pinpointed that error. If I can't find it, I'll have to write a utility to run occasionally to delete the little bit of old data. Our model system is currently running every half hour and that will continue at least until 90L is finished. I will likely then slow it to updating every hour.

Work continues on other areas of this site. A redesign of certain areas, specifically the recon system, will continue throughout the off season. I'm not sure what final changes will be made yet as development is still in the early stages, though early testing is going well. The older recon data in the recon archive has still not undergone any review. That will likely occur sometime during the off season. The changes I plan to make to the recon system will likely require reprocessing all of the data, so I want to wait on the review until I have made those changes. There is still a lot of work to do and until I get even deeper into the testing, I'm not sure what will come of it all.

October 31st, 2011

For nearly two months I have been adding historical reconnaissance data to the recon archive. The data has been added but still needs to be reviewed. The data will always contain errors as this is raw data after all, but I will try to reduce the number of errors in the recon archive where the highest wind data for a mission is listed. This will take time. I am also going to be redesigning some things in the recon archive and eventually around the site.

Some mission data has not been added. Most historical NOAA missions are not available because the raw data from the missions are not available in a World Meteorological Organization (WMO) format. For example, one file will contain observations every second from the plane, or every 30 seconds perhaps, but our site does not decode these files. It would be a lot of work to do so and it would never work perfectly because there would not be other data, like vortex messages. I could convert dropsonde files to a WMO format but I would rather not get involved with creating files that don't currently exist.

In order to add some data from 1989 to 1991, I had to add another decoder. The recon decoder now decodes URNT50 files. The decoder still doesn't decode the older supplementary vortex format, so those messages will not appear in the archive. I'm not sure if I will ever make a decoder for that.

September 9th, 2011

I decided to start making some various changes around the site. I'm not sure how far I will take the redesign yet, but you may notice that the look of some things might change.

One change I have made is to make the new model system in beta testing the primary model system. The old system is still available, but is less prominent now. You can still access the old system here. (no longer available)

August 9th, 2011

July 24th, 2011

All of the data has been reprocessed and added back.

July 23rd, 2011 - Update 2

All of the data has been reprocessed. The upload will begin tonight and may continue into the overnight hours of Sunday/Monday. I added back 2011's data.

July 23rd, 2011 - Update 1

Some small changes and small errors are requiring me to recreate all the data in the best track and model archive in beta testing. I expect that this will be complete by the morning of the 25th. Rather than try and fix the errors I decided to simply reprocess all the data.

Work continues on the origin feature and on the files that include every storm. That might be delayed about two weeks though while I work on some other projects.

July 18th, 2011

The live best track system in beta testing had a lot of issues on the evening of the 17th. A whole lot. At first I couldn't figure it out. After a while I realized that for some unknown reason the file in the tcweb directory of the ATCF system for Bret was disappearing. That was something our system was not prepared for. 98L kept coming back, and when the file was not there for 02L, it went away. I think I have now fixed that problem. The system should now bypass checking for data on a storm that is active but is not in the tcweb directory listing. The storm will be frozen in time. While the system can't find the best track file it will not download model data. As for the invest number coming back, I have something in place now to stop accessing it when this circumstance occurs. It all worked in testing.
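
The bypass logic described above can be sketched roughly as follows. This is only an illustration, not the site's actual script: the function name `plan_updates`, the `upgraded_from` mapping, and the use of bare storm IDs in place of real tcweb file names are all assumptions made for the example.

```python
def plan_updates(active_storms, directory_listing, upgraded_from=None):
    """Decide what to do with each storm on one update pass.

    active_storms:     storm IDs the system currently treats as active
    directory_listing: storm IDs found in the directory listing this pass
    upgraded_from:     hypothetical map of storm ID -> the invest it replaced
    """
    upgraded_from = upgraded_from or {}
    listed = set(directory_listing)

    refresh, frozen = [], []
    for storm in active_storms:
        if storm in listed:
            refresh.append(storm)   # file present: update as usual
        else:
            frozen.append(storm)    # file missing: keep last data, skip models

    # An invest that an active storm was upgraded from must not come back,
    # even if it shows up in the listing while the storm's own file is gone.
    retired = {upgraded_from[s] for s in active_storms if s in upgraded_from}
    ignored = sorted(retired & listed)
    return refresh, frozen, ignored


# The situation from the 17th: the file for 02L vanishes and 98L reappears.
print(plan_updates(["02L"], ["98L"], {"02L": "98L"}))
```

A frozen storm simply keeps whatever data it had on the last successful pass until its file shows up in the listing again.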

Another issue is that when 98L was upgraded to 02L, the development was still listed as a disturbance. From now on, however, when something has the name of a depression (such as 02L) but does not yet have a development level of depression, storm, or hurricane listed, I will call it a depression. That should take care of that issue.

And now back to what I was creating previously.

July 16th, 2011

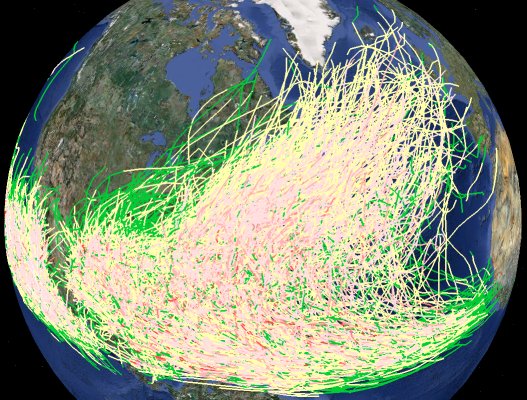

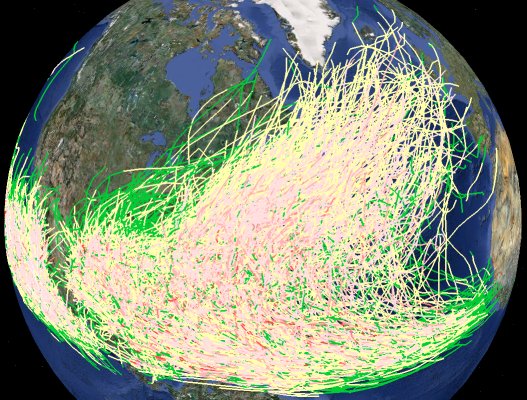

I have worked further on creating Google Earth files for all storm tracks. They are still not online. I have created versions for everything depression or higher, tropical storm+, cat 1+, cat 2+, cat 3+, cat 4+ and cat 5. Now I have started working on Google Earth files for origin points, and also on a file with statistics on storms in history that can be accessed later for various purposes. Those could be anything from a table to sort through the most powerful storms, or a table of the formation date of the first (second, etc.) storm of the season, to different charts, such as named storms per year. Once I get the origin points done I may go ahead and put the files that were created online. A single script creates all this data on demand, not in real time. I have yet to make a final decision on when it will run. I might actually have it run when a depression or higher storm ends and have it run just for that basin. I might also make it available to be run by an administrator or even the general public every so often. I also forgot to mention that my site's distance calculator has been integrated into the new best track and model system. It allows someone to see how far away a storm is from them.
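
For readers curious how a distance calculator like this can work, here is a minimal sketch using the haversine great-circle formula. This is an assumption for illustration only; the formula the site actually uses is not stated here, and the function name and the coordinates in the example are made up.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius; an approximation


def great_circle_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in statute miles between two points.

    Latitudes and longitudes are in decimal degrees (west and south negative).
    """
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    # Clamp to 1.0 to guard against floating point drift before asin().
    return 2 * EARTH_RADIUS_MILES * math.asin(min(1.0, math.sqrt(a)))


# Hypothetical example: a storm center versus a user's location.
storm = (25.0, -75.0)
user = (28.5, -81.4)
print(round(great_circle_miles(*storm, *user)), "miles")
```

The haversine form is a common choice because it stays numerically stable for short distances, which matters when a storm is close to the user.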

July 14th, 2011

I have continued the code cleanup in data.cgi. I have not yet done anything in the main script, which generates the data by checking the ATCF system. I instead decided to test some new things. The image below is a screenshot from Google Earth of offline testing where I create a file with every depression or higher in each basin. I am continuing that testing offline and perhaps in a few weeks I will have it online. I plan on adding one other thing too. I want to make a file that shows just the origin points of storms. I will probably have several versions of that, like one that includes depressions and one for tropical storms. (I could maybe do one for just hurricanes and maybe one for each category too.) I still need to think about it. I might also do versions of the storm track files that feature just a certain type of storm. I also think I will create yearly track files as well for each basin.

I was surprised by the low file size of the Google Earth files that you see in the screenshot below: a 570KB KMZ file for the Atlantic, 275KB for the East Pacific and 20KB for the Central Pacific. Those are KMZ files, which are zipped KML files. The KML files were 15MB, 7.5MB and 500KB respectively.
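
The size difference comes from how repetitive KML text is. A small sketch of the idea, using Python's standard `zipfile` module: a KMZ is a ZIP archive whose main file is conventionally named `doc.kml`. The sample placemarks and helper names below are invented for the demonstration and are not the site's data or code.

```python
import io
import zipfile


def build_sample_kml(point_count):
    """Build a small, repetitive KML document (made-up points)."""
    placemarks = "".join(
        "<Placemark><name>Point {0}</name><Point><coordinates>"
        "{1:.1f},{2:.1f},0</coordinates></Point></Placemark>".format(
            i, -60.0 - i * 0.1, 20.0 + i * 0.1)
        for i in range(point_count)
    )
    return ('<?xml version="1.0" encoding="UTF-8"?>'
            '<kml xmlns="http://www.opengis.net/kml/2.2"><Document>'
            + placemarks + "</Document></kml>")


def kml_to_kmz_bytes(kml_text):
    """Zip KML text into KMZ bytes, storing it as doc.kml."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        archive.writestr("doc.kml", kml_text)
    return buffer.getvalue()


kml = build_sample_kml(1000)
kmz = kml_to_kmz_bytes(kml)
print(len(kml.encode("utf-8")), "bytes of KML ->", len(kmz), "bytes of KMZ")
```

Because every placemark repeats the same tags, the compressed file ends up a small fraction of the original, which is consistent with the ratios quoted above.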

These new features in development will probably work differently than other things on the site which will take some thinking on my part. These files take a lot of resources to create. That means they will not be created constantly. For example, for a current storm, it will probably not appear in the file. I may have a utility that runs every week and does storms that are no longer active. Or I might have it so someone could activate it on demand, but only allow it to run every week. The file with every storm in history might be limited to being created once a month. Still need to think about it all.

July 12th, 2011

I have split data.cgi into a lot of different scripts so it is now much more efficient than it was. (It went from always loading 350KB+ to now loading 30KB plus the script it actually needs.) There is no visible change to end users other than perhaps noticing the web based archive loads quicker. I still have a lot of code cleanup to do though and I will continue to make it work better. I also really need to clean up the main script, which generates the data by checking the ATCF system. That script is about 300KB. That is rather large because it has to be; there is a lot going on. However, at this point it has a lot of code that is only needed to generate archived data. I think I will work on splitting everything I can that does that out of the script so that it is only loaded when someone generates archived data. That would make the script work a lot better.

July 11th, 2011 - Update 4

It's done. Over a quarter of a million files and about 3 gigabytes of data. At this point I will begin code cleanup and splitting the data.cgi script to run better. Then I can look into ideas on what I can do further with the data now that I have all of it available. If you see any problems in the archive let me know. There is a massive amount of data so I am unable to go through it.

July 11th, 2011 - Update 3

Today I let my computer process the rest of the data and I have begun uploading the last of the data. Based on the rate of upload, it should be done late tonight if things continue to go well.

July 11th, 2011 - Update 2

Things have gone very well this morning. I have uploaded everything except 2003 through 2009. Recent years take a very long time to process and upload. Tonight I will start on the rest. I should have the rest done likely by Tuesday night if things continue to go well.

July 11th, 2011 - Update 1

After considering one other feature I decided not to add I have again started processing older data. Everything processed so far, through 1980, went well. The script generated the proper notes for things that were different from the storm table file in the ATCF database. If things go well, and I could always find something that needs to be corrected, all the data could be added by Tuesday evening. Don't count on it, but we'll see. There are hundreds of thousands of files to be created and uploaded.

July 10th, 2011

I have updated all the scripts online and made Calvin's older data unavailable. I had to do that to update the script.

Unfortunately I came up with one more thing I needed in one of the behind the scenes files. That meant everything I did today, trying to upload all data from 1851 to present, was just rendered useless. All the data uploaded was deleted. I'm not going to set a timetable for re-adding everything. However, based upon what I did today I see that it would take about two days to process and upload all the data. Before that however, I need to add the one thing I forgot. Additionally, before I process the data again I am going to make sure all the data is ready before I upload. There were a few dozen subtropical storms and a few other storms that need some slight adjustments in the script before I process the data. I don't want to have to interrupt the process again so I am going to really make sure I am ready next time.

July 9th, 2011 - Update 1

Offline testing has continued to go very well. The last minor new feature I planned to add has been added. None of the recent changes are online yet. I am going to try to wait until Calvin weakens. Otherwise, I'll probably go ahead and update the system even with it active and just hide the older model data that I recreate for it. I made a lot of changes to the back end of the system and I will have to recreate all the old data again. This might be all the changes I make to the back end of the system. Tonight I am going to start further in-depth testing of older data. I have done a lot of testing so far and it seems to have gone well. I may start adding older archived data as soon as early next week. (Combined, there are over 2600 storms in the Atlantic, East Pacific, and Central Pacific, not including invests.) There is one other thing I want to do, though I don't have to do it now: allow invest data to be added from the "store_invest" folder. I haven't done that yet so older invests can't be easily added yet.

I tested offline creating data from 1851 through 1953 this morning and it created successfully. That's easy though, no model data until 1954. Later tonight I'll start testing more and more years with actual model data.

July 6th, 2011

Work continues offline on making sure older data is displayed in the best way possible. It's going well. I keep coming up with slight tweaks for older data though. There are a lot of things to consider because older data has less information available, including some information I have previously counted on having, like the level of development. I am also working on using the file storm.table, in the archive folder of the ATCF system, which has a list of all old storms. I plan on using that to get storm names and the highest level of development when I can't get that from the best track or model file. After that, the one major thing I was thinking about doing is too complex, at least right now, so that leaves a few minor additions that I can think of at the moment before the system will be ready to generate older data. Within a few weeks I might have all the archived data available.

July 4th, 2011

This morning I completed the update that should allow data before 1970. I wanted to get the update live to start testing it on the live system as it changed the file structure again of some of the files. The system might be a lot less stable until the bugs get worked out. I tested some data for various old years and things went well. I have removed the old data from the live system I was testing. I wanted to check for any bugs once online. I will continue offline testing. Next I will see what other features I may want to add. After any new features I want to add are added I will then process and add all old data, from 1851 to present. I'm not sure when that might occur. At that point I will probably have a new range of features that I could add given all of the historical data to work with.

July 3rd, 2011

Work began yesterday evening on handling data prior to 1970. It is going very well. I think I already finished the changes to the main script. Now I have to adapt the web based archive. None of these changes are live yet. The model error feature already worked for all years in which model data was available, which was great. A negative Unix time is created for older data and it works using that. There are still a lot of changes though. I am once again changing the file structure and I am changing how time is calculated. (It is going from a Unix time stamp back to the original 10 character time code I get from best track and model files.)
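
To illustrate the two ways of handling time mentioned above, here is a small sketch. It assumes the 10 character time code is laid out as YYYYMMDDHH in UTC, which is how ATCF files usually write dates; the function names are made up for the example and this is not the site's own code.

```python
from datetime import datetime, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def parse_time_code(code):
    """Parse a 10 character YYYYMMDDHH code (assumed layout) as UTC."""
    if len(code) != 10 or not code.isdigit():
        raise ValueError("expected 10 digits, got %r" % (code,))
    return datetime.strptime(code, "%Y%m%d%H").replace(tzinfo=timezone.utc)


def to_unix_seconds(code):
    """Seconds since 1970-01-01 00:00 UTC; negative for earlier dates."""
    delta = parse_time_code(code) - EPOCH
    return delta.days * 86400 + delta.seconds


def hours_between(start_code, end_code):
    """Whole hours from one time code to another."""
    return (to_unix_seconds(end_code) - to_unix_seconds(start_code)) // 3600


print(to_unix_seconds("1851062500"))                # a large negative number
print(hours_between("1969123118", "1970010106"))    # spans the 1970 boundary
```

Subtracting from a fixed epoch, rather than asking the operating system for a timestamp, avoids platforms that refuse to produce negative values. Keeping the original time code in the files and only converting when a calculation needs it is one way to sidestep the problem entirely.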

I may be ready with the update within a day or two. There are a lot of changes so it will make the system more unstable when I apply the update until the bugs are worked out.

July 2nd, 2011

A significant update occurred to the new best track and model system in beta testing today. There are few changes you will notice visibly. The update changed the file structure of things and could bring about some new bugs. I have still not updated the script to allow archived data prior to 1970. That will be an extremely significant update if I do that.

All older data was deleted as it would no longer work with this update. I have however recreated all data for this year. If someone wants older data, let me know and I will still create some of the old data for historic storms. I will eventually add all data from now through 1970. I still have more features I would like to explore before I do all of that though.

Among the updates: Past track in Google Maps. (And options on how to show it.) Extra data in Google Maps and Google Earth for model data, when available. (Not simple wind or sea swath text data though.) Show best track storm position in Google Maps. Change storm icon size in Google Maps. Show actual coordinates for a model point in Google Earth info window. Model data that does not have intensity for the "0" hour should not have intensity available for the other hourly forecast points. (Fixes a problem with a few track models that had completely incorrect intensity values.) Added new model names for 2011. Model names now appear in Google Earth for models that were created in historical mode. Numerous other updates to the file structure behind the scenes. (Eventually, some of the additional text data that appears now in Google Maps and Google Earth may appear on the model text page in the web interface.)
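
The intensity rule in the list above can be sketched like this. The data layout (a dictionary of forecast hour to a point with a `wind_kt` value) and the choice to treat both a missing value and 0 as "no intensity" are assumptions for the example, not the format the system actually uses.

```python
def clean_intensities(forecast):
    """Drop all intensities when the 0 hour point has none.

    forecast maps forecast hour (int) -> dict with an optional "wind_kt".
    Returns a new dict; the input is left untouched.
    """
    zero_hour = forecast.get(0, {})
    has_initial_intensity = bool(zero_hour.get("wind_kt"))
    if has_initial_intensity:
        return {hour: dict(point) for hour, point in forecast.items()}
    # No usable 0 hour intensity: treat the model as track-only.
    return {hour: dict(point, wind_kt=None) for hour, point in forecast.items()}


# Hypothetical track model carrying junk intensity values after hour 0.
track_only = {0: {"lat": 20.0, "wind_kt": 0}, 12: {"lat": 21.0, "wind_kt": 250}}
print(clean_intensities(track_only))
```

The reasoning is that a model which reports no starting intensity is most likely a track-only model, so any later intensity values are more likely filler than a real forecast.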

July 1st, 2011

I think I have completed the past track feature I was working on for Google Maps. I might add to it in the future though. I have not yet made the change live though. The change will require that I delete all old data. I will probably do that this evening and I will recreate all of 2011's data and add it. Extra text model data now appears in both Google Maps and Google Earth, when available. However, I still don't add the text wind swath model data in Google Maps or even the main Google Earth file. You still have to go to the separate Google Earth wind swath file, when available, for that. I definitely did not want to include it in the Google Maps version because it added too much to the file size of the XML file that was loaded.

June 30th, 2011

Our site yesterday switched data sources for gathering the latest recon because our site was not working well for some reason with the previous data source. Our site was not updating every time it should when the previous data source updated. The system may work better or worse now in terms of how fast it updates. Please use our "Add an Observation" feature if our site is not properly adding data. A bug was fixed two days ago that was preventing that from working properly. The recon system now contacts a NOAA FTP server. We'll see how that goes. I think my site was not always contacting the previous NOAA site I was using because it didn't think the page would update that frequently so it saved a cache. The new NOAA server I contact might not always be as easy to contact but seems like it updates slightly faster.

I also made a few small changes to the recon system to accept some West Pacific observations into the decoder when the aircraft is an Astra jet.

Progress on adding some extra text data for models in the best track and model system in beta testing is going well.

June 27th, 2011

I was sick for a few days so I didn't get too much done. I'm back to working on the past best track for Google Maps. I also want to add some extra CARQ data and other extra model data, when available, and I haven't done that part yet. I'll probably hold off on making these updates live. I am making a lot of changes to the system behind the scenes. While most are not features that would be noticeable to end users, the changes would make the older data I have in the system now not work.

June 23rd, 2011

I plan on making a lot of tweaks to the model and best track system in beta testing. I am not ready to make them live yet however. I will have to clear all the model data in the archive to do it. I am changing some of the file structure on some of the files which will require data to be recreated. Once I decide upon the file structure I might make the changes live even though the small tweaks may not be implemented so that end users can see them yet. The one change of real note will be the addition of the past best track in the Google Maps model plots. I have been meaning to do that for a long time. I also plan on having a little more data available for the center position as well and maybe for the OFCL model as well.

A reminder that our live recon system allows observations to be added into it by submitting the link to the ob in the NHC's web based recon directory. I made an update to it today that has not been tested live yet. You can now add obs from the past 24 hours as long as the storm directory on our site has not been moved and you are not replacing a previous observation. For the East Pacific storm Beatriz our site (Tropical Globe) missed several obs but no one added them. Our site updates only at certain intervals and sometimes misses data. Setting this update interval too frequently puts extra load on our site and NOAA's server which is why our site sometimes misses obs. I don't follow every mission live so someone who does can add the obs for us and get the benefit of having a complete mission map more rapidly.
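
The three conditions for adding an ob can be sketched as a simple check. Everything here is hypothetical (the function name, the parameters, and how an observation is identified); it only restates the rules described above and is not the recon system's code.

```python
from datetime import datetime, timedelta, timezone


def can_add_observation(obs_id, obs_time, now, existing_ids,
                        directory_moved=False, max_age=timedelta(hours=24)):
    """Return True if a submitted observation may be added.

    obs_id:          hypothetical unique identifier for the observation
    obs_time, now:   timezone-aware datetimes
    existing_ids:    identifiers already stored for the mission
    directory_moved: True once the storm directory has been moved
    """
    if directory_moved:
        return False            # storm directory no longer in place
    if obs_id in existing_ids:
        return False            # would replace a previous observation
    age = now - obs_time
    return timedelta(0) <= age <= max_age


now = datetime(2011, 6, 23, 18, 0, tzinfo=timezone.utc)
missed = datetime(2011, 6, 23, 2, 30, tzinfo=timezone.utc)
print(can_add_observation("ob-14", missed, now, {"ob-12", "ob-13"}))
```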

June 21st, 2011

For the past week and a half I got sidetracked on something else but now I'm returning to working on the model and best track archive.

Someone asked for 1992, 2004, and 2005 Atlantic data so I added it to the archive. I will still recreate all that in the future when I make some further tweaks to the system. (One small new feature you may notice is the timeline feature on each yearly archive page.) The list of things to do keeps growing, so the release of the older data from now through 1970 may be a month away. (And longer for data earlier than that due to the problem mentioned in the last site update.) Recreating the data now and then again when I finish making the updates is not worth it. That is a massive amount of data to upload. But, if you have some specific data you would like to see, let me know.

I realized that our site recently turned five years old. It doesn't seem like it is that old, but I'm not sure why, because thousands of hours have been spent coding the site since its start. On June 11, 2006 I bought this domain. At the time the site was one page full of links. (A look back at our one page site on June 13, 2006. I lost the original logo, I think it had some extra white space, but the one on the page probably appeared a few days later.) About a month later we started offering model plots in Google Maps and Google Earth and later in 2006 had an early version of our recon decoder that only decoded HDOB messages.

June 10th, 2011

You may have noticed that older data in the new best track and model archive, currently in beta testing, has been removed. I added a new line to one file and removed a line from another, and as a result of the first change I need to recreate that old data. That is simple to do. However, before I do, I plan on making some updates to the system. I have made a lot of changes to the system in the past week and I probably have a lot more to go. The system seems to work well for current storms, but I am getting ready to process all historical model and best track data for release on my site. The best track file format was slightly different a few decades ago so I had to adjust the system for that. That seems to have gone well. However, in order to process data from 1850 to 1969 I need to make some significant changes to the system because my script relies heavily on getting the time by counting the number of seconds from 1970 (the Unix timestamp). Unfortunately, that means I can't process data earlier than that until I come up with a new way to handle time in my script for data prior to 1970. (The new timeline feature I added to the model archive gave me some ideas on how I might do that.) I'm also preparing to add a little more model text data for CARQ and OFCL because the model file sometimes has a little extra data, like storm speed and direction, that I do not currently grab. Meanwhile, testing is going well on data from 1970 to now. I keep making improvements to the system, so I decided to hold off a bit longer before releasing all of that historical data publicly in case I add any new features that would require me to reprocess all of that data again. (It will probably create a few hundred thousand files for the archive.) I might recreate the old data before I work on all the possible new features, only because some of them may take a while to implement or I might decide against some of them in the end. I do have some interesting ideas that I'll consider over the next few months.

-- Update 1: A total of four new lines have been added to one of the files in the system, which required another manual update. The new lines will have some extra CARQ and OFCL model data. I have not implemented it yet. I just wanted the system to start creating blank lines so they can be read when the update is implemented. It might be a week or more since I want to make some other updates as well.

-- Update 2: Now there are five extra lines I added.
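As an illustration only, here is one possible way to handle time before 1970, sketched in Python. It is not the script this site runs. The function names and the YYYYMMDDHH stamp layout are assumptions made for the example. The idea is to let a calendar-aware date object represent the moment directly, so the count of seconds from 1970 simply becomes negative for older data.

```python
from datetime import datetime, timezone

# Reference point used by Unix timestamps.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def parse_stamp(stamp):
    """Parse a hypothetical YYYYMMDDHH stamp (UTC) into a datetime."""
    return datetime.strptime(stamp, "%Y%m%d%H").replace(tzinfo=timezone.utc)

def seconds_from_epoch(moment):
    """Signed seconds from 1970-01-01 00Z; negative for earlier dates."""
    return int((moment - EPOCH).total_seconds())

def hours_between(start, end):
    """Elapsed hours between two moments, with no dependence on 1970."""
    return (end - start).total_seconds() / 3600

first = parse_stamp("1935090200")
later = parse_stamp("1935090212")
print(hours_between(first, later))                     # 12.0
print(seconds_from_epoch(parse_stamp("1969123100")))   # -86400
```

Working with date differences rather than raw second counts means the same code path could serve both the 1850 to 1969 data and the data from 1970 onward.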

June 2nd, 2011

I think the new best track system is good enough to be upgraded from alpha testing to beta testing. The system has had some bugs that I corrected in the past 24 hours, but given how well it did in other aspects of the testing, I have decided to upgrade its development status. There is still one bug where old raw data is not always deleted when a storm is no longer active, but that is on the administrative end. I am still making lots of tweaks to the system here and there. I also plan to integrate my site's distance calculator into this system so people can see how far away a storm is from them right inside the new system. A lot of the bugs that I found over the past 24 hours were in generating historical data. Eventually I will likely add all old model data into the system so that there is a complete archive for those who wish to research the model and best track data. I might still need to work out a few kinks in that. The model error feature is now stable enough that I have removed the notice not to use it. It is very important that people understand how it works before ever using it though. I reread the product description page and tried to make some things a little clearer. I know it could still use some work. The model error feature is complex to explain, but people need to understand it before using it, which is why the product description is so wordy.

June 1st, 2011

Our site has now resumed normal operations for the hurricane season... for real this time. I forgot to set the decoders to update for the four main recon products. Everything should now be working properly.

I have yet to check to make sure all the links on the site work. Each season I do that since sites sometimes move. I'll get to that soon.

I have made a product update to how I handle wind direction. Here is the old way I did things:

349°-10°: the N

11°: between the N and NNE

12°-32°: the NNE

33°: between the NNE and NE

34°-55°: the NE

56°: between the NE and ENE

57°-77°: the ENE

78°: between the ENE and E

79°-100°: the E

101°: between the E and ESE

102°-122°: the ESE

123°: between the ESE and SE

124°-145°: the SE

146°: between the SE and SSE

147°-167°: the SSE

168°: between the SSE and S

169°-190°: the S

191°: between the S and SSW

192°-212°: the SSW

213°: between the SSW and SW

214°-235°: the SW

236°: between the SW and WSW

237°-257°: the WSW

258°: between the WSW and W

259°-280°: the W

281°: between the W and WNW

282°-302°: the WNW

303°: between the WNW and NW

304°-325°: the NW

326°: between the NW and NNW

327°-347°: the NNW

348°: between the NNW and N

You can find the new way here. When reporting something that falls between the sixteen cardinal directions this site uses, the mathematical separations that end in .75 should be rounded up rather than down, which is what I had done previously. Previously, our site reported NNE/NE when something was 33 degrees. The actual mathematical separation occurs at 33.75 degrees. Our site will now report NNE/NE for the whole number 34 degrees. Because this change is so minor, the recon archive will not be updated for messages that have already been processed. As mentioned previously on this site, how cardinal direction is handled is subject to change in the future. I still don't know of an official way to handle things, which is why it remains subject to change. This change has been made, effective immediately, across all site systems on Tropical Atlantic and Tropical Globe.
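The difference between the old and new handling can be sketched in a few lines of Python. This is only an illustration, not the site's actual code. It assumes the separations sit at 11.25 degrees plus multiples of 22.5, that the old way always rounded the separation down to a whole degree, and that the new way rounds separations ending in .75 up while those ending in .25 still round down. The names POINTS, between_degrees and describe are made up for the example.

```python
import math

POINTS = ["N", "NNE", "NE", "ENE", "E", "ESE", "SE", "SSE",
          "S", "SSW", "SW", "WSW", "W", "WNW", "NW", "NNW"]

def between_degrees(round_up_75):
    """Map each whole-degree 'between' value to the two points it separates."""
    result = {}
    for i in range(16):
        # Exact separation between POINTS[i] and the next point clockwise.
        boundary = 11.25 + 22.5 * i
        if round_up_75:
            whole = math.floor(boundary + 0.5)  # .75 rounds up, .25 rounds down
        else:
            whole = math.floor(boundary)        # old way: always round down
        result[whole] = (POINTS[i], POINTS[(i + 1) % 16])
    return result

def describe(degrees, round_up_75=True):
    """Return a label such as 'NNE' or 'NNE/NE' for a whole-degree direction."""
    degrees = degrees % 360
    between = between_degrees(round_up_75)
    if degrees in between:
        first, second = between[degrees]
        return f"{first}/{second}"
    # Otherwise pick the nearest of the sixteen points.
    return POINTS[int((degrees + 11.25) // 22.5) % 16]

print(describe(33, round_up_75=False))  # NNE/NE (old way)
print(describe(33))                     # NNE
print(describe(34))                     # NNE/NE (new way)
```

Under these assumptions only the eight separations ending in .75 move by one degree. Those ending in .25, such as 11.25, give the same whole number either way.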

May 23rd, 2011

Our site has now resumed normal operations for the hurricane season.

The NHC's "tcweb" directory has not been updating for 92L and 90E. Our site has some backup methods in place to attempt to handle this. However, they don't work well at the start of the season for the new system in alpha testing. The old system looks at the "aidpublic" folder to determine what is available. That is better in some respects. However, the new system focuses on the "tcweb" folder instead so it can organize everything, even if there is no model data. As a backup, the "btk" folder is checked in some cases. If nothing has been active in the "tcweb" folder for 8 hours, the new system will check the "btk" folder, but only if something has been active in the "tcweb" folder or "btk" folder in the past 24 hours. If not, the system assumes it is a slow period and skips checking the "btk" folder. This did not work today because it is the offseason and nothing occurred in the last 24 hours. I could check the "btk" folder more often automatically, but I would rather not put even more strain on the NOAA server since it is an error that is unlikely to occur too often when things really matter. Today, I designed something that will allow me to manually run the system using the "btk" folder very easily. After that, it will continue to check the "btk" folder as needed until nothing happens for 24 hours.
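The backup rule described above can be written out as a small decision function. The Python below is a rough sketch of that rule as I read it from the description, not the system's real code. The function name, its parameters and the manual_run flag are hypothetical.

```python
from datetime import datetime, timedelta

TCWEB_QUIET = timedelta(hours=8)       # "tcweb" silence before the backup kicks in
RECENT_ACTIVITY = timedelta(hours=24)  # window that counts as "not a slow period"

def should_check_btk(now, last_tcweb, last_btk, manual_run=False):
    """Decide whether to look at the "btk" folder on this run.

    last_tcweb and last_btk are the times something was last seen active
    in each folder, or None if nothing has been seen.
    """
    if manual_run:
        # A manual run always checks "btk", even in a slow period.
        return True
    tcweb_quiet = last_tcweb is None or now - last_tcweb >= TCWEB_QUIET
    if not tcweb_quiet:
        return False
    seen = [t for t in (last_tcweb, last_btk) if t is not None]
    # Only bother the server if either folder was active in the past 24 hours.
    return bool(seen) and now - max(seen) <= RECENT_ACTIVITY

now = datetime(2011, 5, 23, 12)
long_ago = now - timedelta(days=3)
print(should_check_btk(now, long_ago, None))                     # False
print(should_check_btk(now, long_ago, None, manual_run=True))    # True
print(should_check_btk(now, long_ago, now - timedelta(hours=1))) # True
```

The last call shows why one manual run is enough: once the "btk" folder has been seen active, the 24 hour window keeps the automatic checks going until things go quiet again.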

At some point the old model system will be turned off once the new system is well tested. Last year I had planned on doing that at the start of this year's season, but I am going to see how the new system works as the season progresses. I still consider the new system to be in alpha testing, below beta testing, so it may not be until next season that the old system is turned off. When available, the new system offers more data and more options that make it much better than the old one.

The Atlantic and East/Central Pacific recon systems remain turned on. If things get very busy in the Atlantic, the East/Central Pacific recon system may be turned off to save resources.

I don't have any new features planned for this year. I plan on testing the new model system and making sure the model error works as expected. I also need to revisit the help page about model error and improve it, along with some tweaks here and there that make it more clear that model error data can be misleading at times. I might make little improvements here and there throughout the site, but I don't have anything major planned. I eventually want to do NHC advisory data in Google Earth, and have had that system sitting around for many years now, but I need to do the error cone and that is what has always held me up. I am working on an e-commerce venture at the moment, so NHC advisory data will probably not show up until at least 2012.

February 4th, 2011

I often receive requests to turn on the Pacific recon system. I finally decided to turn on the East and Central Pacific live recon system on a trial basis, at least through some of the winter missions. I also updated the system to match the Atlantic system and West Pacific system. (The West Pacific system will still operate only when I am notified of storms.)

I'll have to see how things go with two systems being live at the same time to see if I will continue the live system for the East and Central Pacific.
